"Each remix was unique"

Lynn von Kurnatowski from the DLR has created a special algorithm in collaboration with HIDA Fellow Martin Hennecke: It generates a live remix of a piece of music from biometric data. We spoke with her about the interdisciplinary project work.

What was it about "The (Un)Answered Question" project that appealed to your department?

I think I can speak for everyone involved in our department when I say that we were drawn to the uniqueness of Martin's (Hennecke, editor's note) project. It also appealed to us to bring together the two fields of art and science and to work in an interdisciplinary way. After all, being able to bring aspects that we deal with in our daily work into a completely different context is fascinating, which is why we were really pleased that this project actually materialized.

Our interview partner Lynn von Kurnatowski

Lynn von Kurnatowski received her M.Sc. in Computer Science from Friedrich-Alexander University Erlangen-Nürnberg. Since 2018 she has been a research scientist in the Intelligent and Distributed Systems group at the Institute for Software Technology at the German Aerospace Center (DLR). There she works on the systematic analysis of software repositories with the long-term goal of optimizing the general development process of software systems in a research context. In addition to software analytics, she focuses on human factors in software engineering, using qualitative and quantitative methods to understand the relations between human factors and software engineering phenomena.

In "The (Un)Answered Question," various body data of the audience and also of the performers were recorded - for example, heartbeat, facial expressions, etc. An intelligent algorithm collected these various data and processed them into video projections and a live orchestral remix of the original composition. How did you come up with this final idea?

The idea of involving the audience was there from the very beginning. That was important to Martin. The general idea at the beginning was to record data from the audience and process them into an orchestral remix of the original composition. In order to do that, there were certain aspects that needed to be resolved. For example: What data can we record at all?

Generally, you can record both subjective and objective data. For this project, we decided that we wanted to use a questionnaire to determine personality traits and combine them with the biometric data. For the objective data, we then had to establish the procedure. When we conduct studies in the laboratory, there is only one person in a highly controlled environment. We can also address any needs that arise, and if something doesn't work out, the study can be repeated.

In this case, that would not have been possible: We had 180 people sitting in the hall, and we couldn't lay cables all over the theater. So the question arose: Which sensors can we use at all to record the biometric data of the audience? After that was settled, the next question immediately came up: How do we now create sheet music from the data that we have collected?

Ultimately, we wanted to develop an intelligent algorithm that would automatically create a remix from the biometric data. Together with Martin, we had to work out: What is technically possible? And what is possible from an artistic point of view? That's when the collaboration really got going and we started to think about things in concrete terms.

How was music generated from the algorithm? Was it working along the lines of: the heartbeat gets faster, so the rhythm of the song gets faster? Or how can one imagine that?

That's exactly the kind of linear assignment we didn't want. It was important to us that it wouldn't be like: "Okay, the pulse influences the strings and the face recognition influences the trumpet." We wanted an intelligent mapping. That's why we looked at our everyday work and repurposed methods from it. We quickly came up with the idea of clustering the data. Using the center of the cluster, we then manipulated certain aspects of the original composition. Of course, we were bound by certain limits, because the final piece still had to be playable for the orchestra. So it couldn't become infinitely fast, for example. Nevertheless, we were able to avoid a linear assignment and each remix was unique.
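To make the clustering idea concrete, here is a minimal sketch. It is not the project's actual algorithm: the feature layout (a normalized pulse and a facial-expression score per audience member), the function names, the tempo formula and the tempo limits are all assumptions made for illustration. The sketch clusters the audience data with plain k-means, takes the center of the largest cluster, and derives a tempo that is clamped so the result stays playable.

```python
from statistics import mean


def kmeans(points, k, iterations=20):
    """Plain k-means on equal-length tuples.

    Returns (centers, sizes). The first k points serve as initial centers,
    which keeps the sketch deterministic.
    """
    centers = list(points[:k])
    sizes = [0] * k
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            # Assign each point to the nearest center (squared Euclidean distance).
            nearest = min(
                range(k),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centers[i])),
            )
            clusters[nearest].append(p)
        # Move each center to the mean of its cluster; keep it if the cluster is empty.
        centers = [
            tuple(mean(dim) for dim in zip(*cluster)) if cluster else centers[i]
            for i, cluster in enumerate(clusters)
        ]
        sizes = [len(cluster) for cluster in clusters]
    return centers, sizes


def clamp(value, low, high):
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def tempo_from_center(center, base_bpm=60.0, min_bpm=40.0, max_bpm=120.0):
    """Hypothetical mapping from a cluster center to a playable tempo.

    Assumes every feature is normalized to [0, 1]. All numbers are made up.
    """
    factor = 0.75 + 0.5 * mean(center)
    return clamp(base_bpm * factor, min_bpm, max_bpm)


# Each tuple: (normalized pulse, facial-expression score) for one audience member.
audience = [(0.1, 0.2), (0.9, 0.8), (0.15, 0.25), (0.85, 0.9), (0.95, 0.85)]
centers, sizes = kmeans(audience, k=2)
dominant = centers[sizes.index(max(sizes))]
tempo = tempo_from_center(dominant)
```

The toy formula at the end is still a simple function. The point of the sketch is only that its input is a cluster center combining several signals rather than one sensor driving one instrument, and that the output is bounded so the orchestra can still play it.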

What challenges did you encounter in the project? How did you then solve them?

Our biggest challenge was, of course, the environment. We knew we were in a theater with 180 people. This was not a laboratory study; everything had to work flawlessly. Before the premiere, we had a rehearsal performance in Dortmund at the Academy for Theater and Digitality with an audience of 15. The rehearsal performance worked quite well. But we had to scale it up to 180 people.

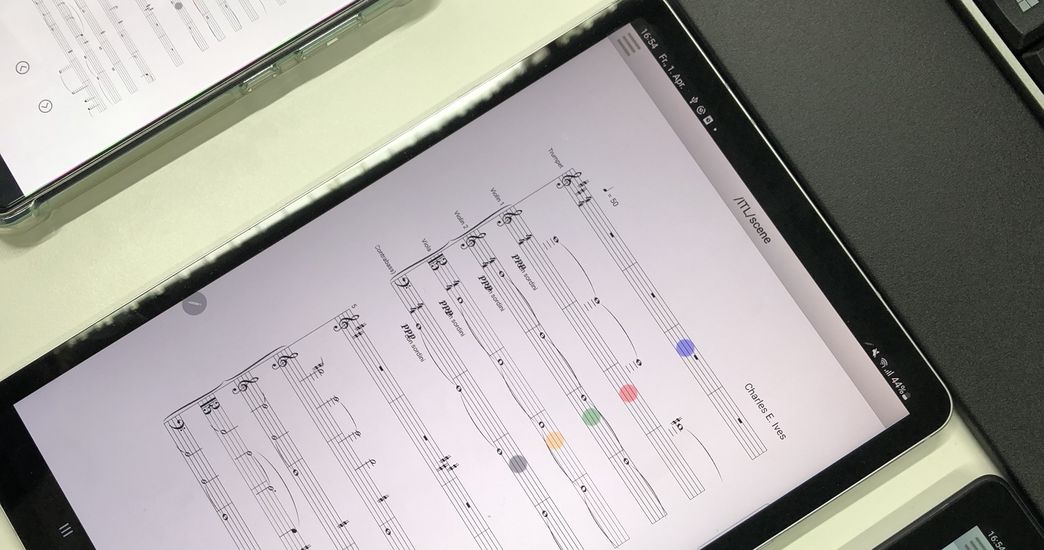

Initially, we wanted to have all the data collected by one computer and then evaluate it with a second one. We quickly realized that this would exceed the capacity of the computers, so we had to divide the data acquisition among several machines. For example, we used two computers for the data from the fitness trackers: one for the left and one for the right side of the auditorium. The second challenge, of course, was that we didn't have a lot of time to prepare the raw data and then generate the sheet music from it. It all had to be done in five minutes, so many things had to run in parallel.
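The split by auditorium side can be pictured with a small sketch. It is only an analogy: two threads in one process stand in for the two separate computers, and the function names, the plausibility range for pulse values and the summary format are assumptions, not details from the project.

```python
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def collect_side(readings):
    """Stand-in for one acquisition machine.

    Drops implausible pulse values (assumed range: 30 to 220 bpm) and
    summarizes the rest.
    """
    valid = [bpm for bpm in readings if 30 <= bpm <= 220]
    return {"count": len(valid), "mean_bpm": mean(valid) if valid else None}


def collect_auditorium(left_readings, right_readings):
    """Process both halves of the auditorium concurrently and return both summaries."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        left = pool.submit(collect_side, left_readings)
        right = pool.submit(collect_side, right_readings)
        return {"left": left.result(), "right": right.result()}


summary = collect_auditorium([60, 80, 0], [100, 300])
```

Keeping the two halves separate until the end also matches the way the remix was performed, with one version per half of the audience.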

About the project "The (Un)Answered Question"

In collaboration with scientists from DLR and MDC, the composer and HIDA Fellow Martin Hennecke has devised a special use case for data science: The (Un)Answered Question. The experimental concert was premiered at the Saarland State Theatre on November 8, 2022. For this project, artificial intelligence composed various live remixes of a piece of classical music. Based on Ralph Waldo Emerson's poem "The Sphinx" and the piece "The Unanswered Question" by American composer Charles Ives, Martin Hennecke had previously developed a prototype for the performance as part of his HIDA fellowship. At the premiere, the two interwoven works were modified through the use of artificial intelligence.

Crucial to the result was a variety of audience data – including pulse and facial expression, which were captured using fitness trackers and emotion recognition via image recognition software. An algorithm then processed this data into a live remix of the original composition, which was played by the orchestra in two versions (data from the left and right halves of the audience). The result was an immersive and exclusive live experience in the sold-out "Alte Feuerwache" of the State Theatre in Saarbrücken. The concert was followed by a panel discussion in which scientists from the Berlin Ultrahigh Field Facility of the MDC and the DLR Institute for Software Technology participated and reported on their involvement in the project.

You can read more about the concept and implementation of the project in this wiki.

Certainly, the interdisciplinary collaboration was also exciting. What were interesting points in the collaboration with the artists?

In the first meeting we realized that we had to find a common language first. We had regular meetings with Martin in which we talked about the project as a team, and discussions arose. Sometimes he had to say, "If you feel that way, I think it will be all right. I will trust you." Conversely, he sometimes used technical terms from art and music that we didn't know. That was funny.

The collaboration with the orchestra was also exciting. We didn't know how the musicians would react. The conductor told us that they normally receive the sheet music four weeks before the performance in order to prepare. In our project, they received the new sheet music directly on the tablet and had to play it immediately. This was very interesting to witness during the performance.

You now also want to publish a scientific paper about this project. What can other scientists learn from your experience?

There are more and more tracks - also at conferences - that deal with software engineering in society, which address precisely this interdisciplinary aspect that we experienced in the project. In addition, we have taken a lot from this project for our own work - especially regarding working with biometric data.

Finally, a question about the future: What other art form would you like to transform with data science now?

I think music, especially orchestral music, still offers great potential and is an exciting area. That's why I wouldn't even say what other art form. I think it's more interesting to stick with it and expand on that. Maybe by adding more biometrics or more components. We're still at the beginning, so there's still a lot we can do.